My wife’s first reaction to using the Vision Pro (and Meta Quest 3)

My wife wasn’t super interested in the Vision Pro, but after having it in the house for a few days, she finally said, “fine, let’s see what this is all about.”

So I got her into the headset, and two things immediately stood out to her:

- The headset is heavy to the point of instant discomfort

- Having to manage a battery pack is immediately annoying

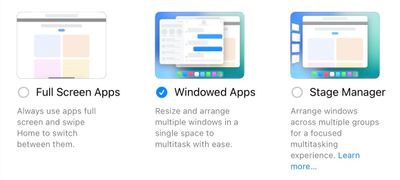

We carried on, and she went through the setup process, including the relatively extensive steps of aligning the lenses and setting up eye tracking by selecting the circle of dots three times. I was watching her the whole time and was immediately struck by how she instinctively reached out into space to pinch the circles in front of her. I told her she didn't need to move her hand and could just pinch anywhere in front of her. That didn't strike her as intuitive; she did it, but she clearly didn't love the idea.

Afterwards, she said it was weird to see something in her space that looked so real, like something she should be able to interact with directly, yet she had to look at it with her eyes and pinch with her hand without ever actually touching the thing itself. This odd feeling persisted for the whole demo, and she really didn't like having to look hard at a specific thing to do something with it. My interpretation is that the indirect manipulation of everything felt unintuitive to her and was a break from the direct manipulation she's used to on PCs and touch-screen devices.

Thankfully, visionOS also lets you poke at many parts of the UI directly, and eventually she had more luck walking up to things in space and poking them. Once she started doing this, she was launching apps and using them much more comfortably.

No Vision Pro demo is complete without trying the Explore Dinosaurs app, so I had her open it (by poking the icon) and start the demo (by poking the start button). She instinctively put out a finger for the butterfly to land on, which was cool to see, and the rest of the demo went well. I asked if she wanted to try another app to see an F1 car in our living room, and she was like, "nah, I'm good."

Ultimately, she thought the tech was cool but didn't immediately see how it was useful. She also doubled down on the look-and-pinch interface being weird and less intuitive than touching things directly. Out of curiosity, I asked her to try my Quest 3 for a minute to see how interacting with things felt there, and she was immediately more comfortable with that interaction model, since the Quest 3 lets you point and pinch with your hand.

This was just a quick first experience with both headsets, but I thought it was interesting to see what someone totally unaware of the marketing and tutorial videos for each product would find more intuitive. Of course, this is one person who spent a few minutes in each headset, so her long-term preferences could change as she gets more used to each product. And doubly of course, this isn't meant to extrapolate to everyone, which is why, if this were a full UX test, we'd interview as many people as we could to get more data points.